AI Discipline

Lower the Deployment Threshold for Large Multimodal AI Models, Enhance On-Device Training Efficiency, and Provide Comprehensive Support from Model Implementation to Application Validation

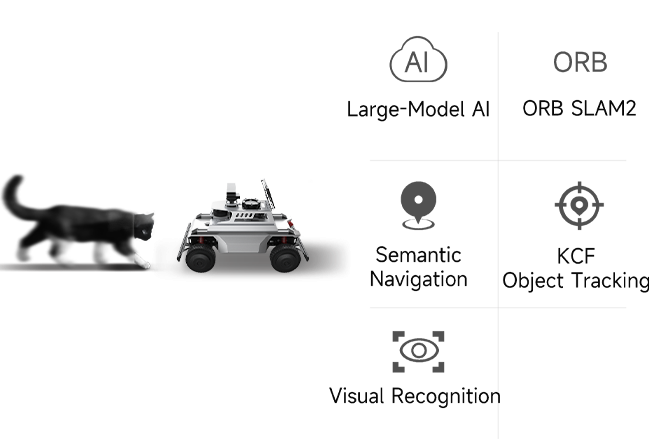

Powered by NVIDIA AI, OS-nano fuses visual and voice perception to understand its environment and execute tasks autonomously across a wide range of embodied robotics applications.

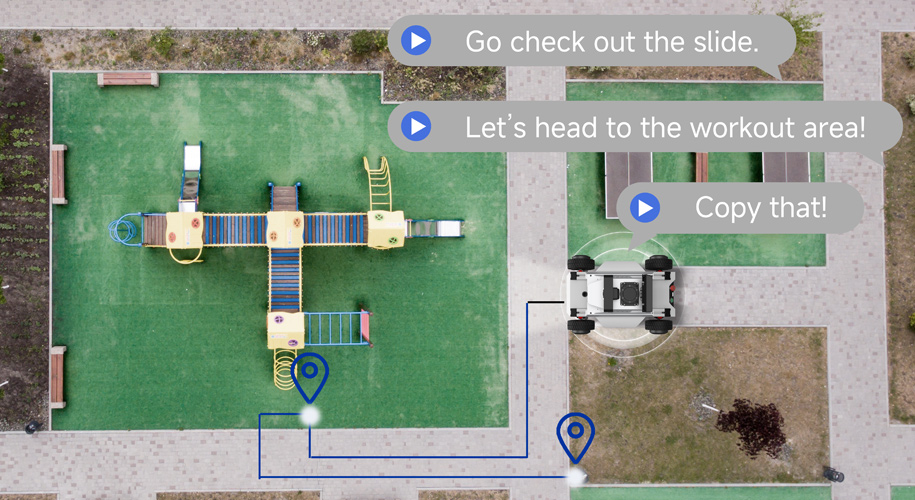

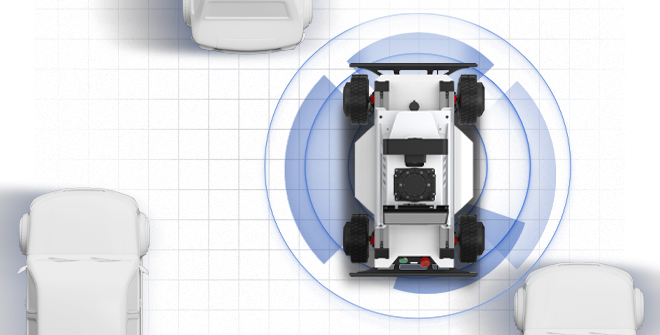

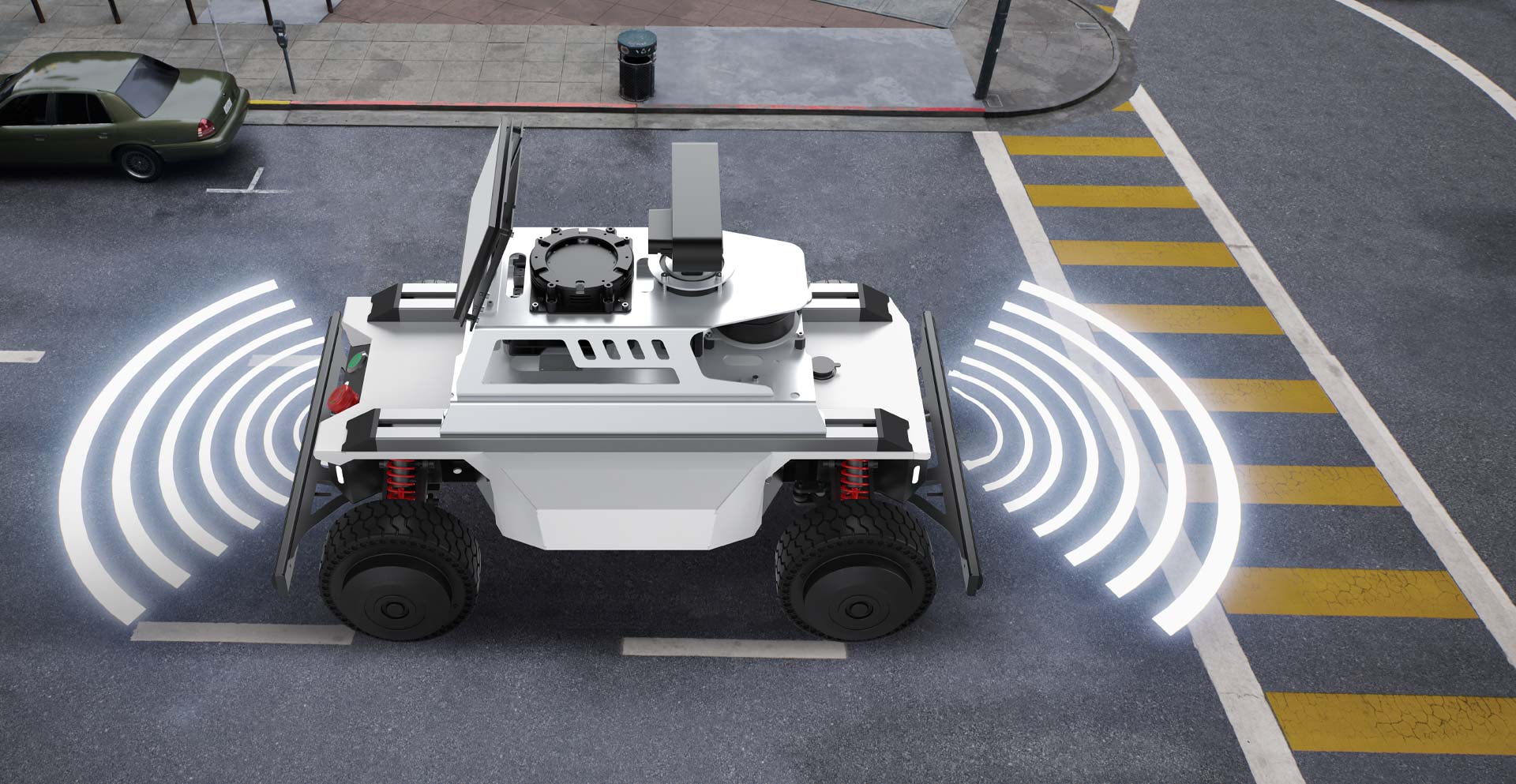

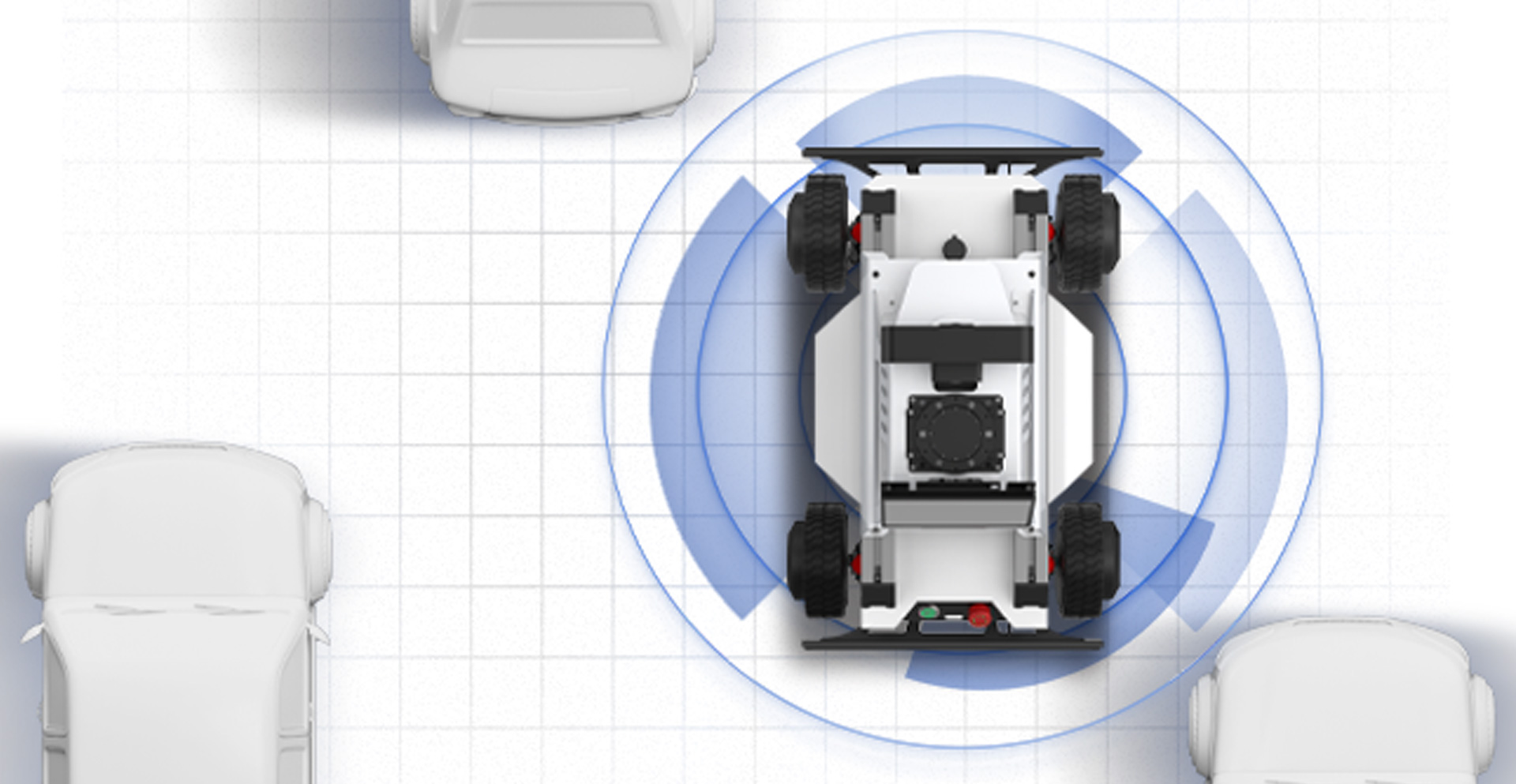

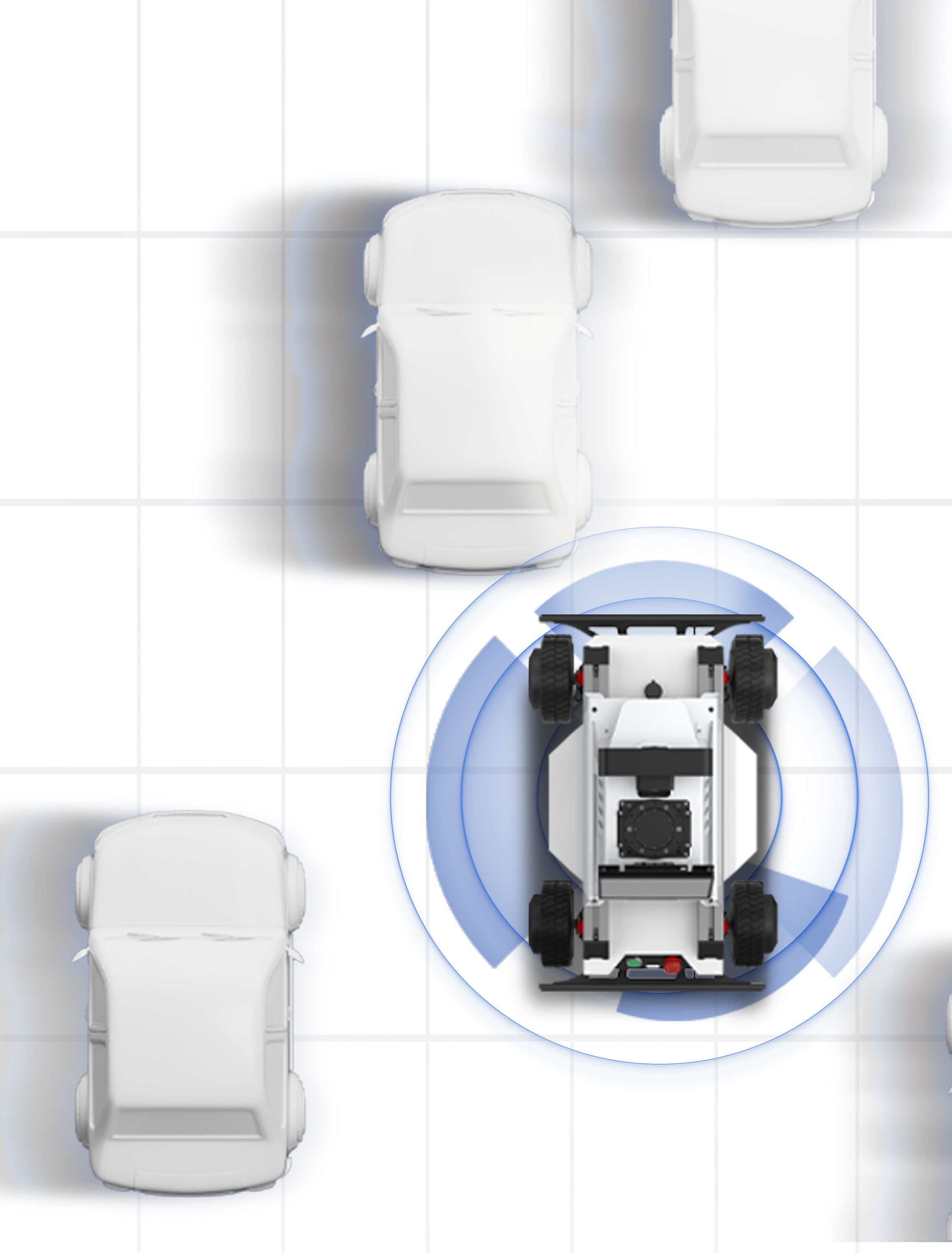

Fusing multi-source sensor data with diverse algorithms supports the development and application of navigation in complex environments, laying a solid technical foundation for autonomous driving, robotics, and enhanced navigation applications.

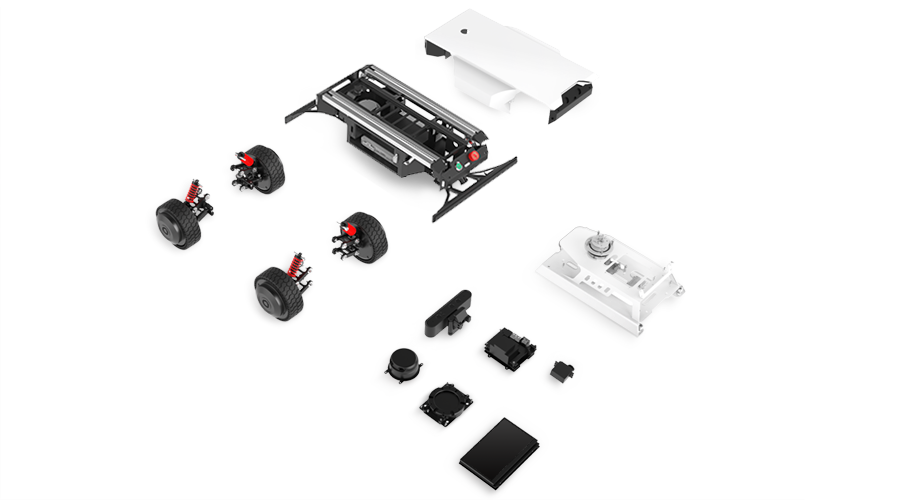

A full-process workflow covering hardware, algorithms, system integration, installation, and innovative research, designed to drive practical innovation and solve complex technical problems.

Powered by modular hardware, deployment of large multimodal AI models, and ROS ecosystem integration, OS-nano delivers an all-in-one robotics platform featuring AI algorithms, embodied interaction, autonomous navigation, and vehicle–road collaboration.

Lower the Deployment Threshold for Large Multimodal AI Models, Enhance On-Device Training Efficiency, and Provide Comprehensive Support from Model Implementation to Application Validation

Physical Hardware + Multimodal Perception + Real-Time Action Control: key support for developing intelligent agent–environment interaction

Navigation Module & Modular Chassis Building: full-cycle support, from basic learning to in-depth innovation, for autonomous navigation, scenario adaptation, and function expansion.

Enable vision-based autonomous driving, advance vehicle–environment interaction capabilities, and provide end-to-end technical development solutions.

Voice Control

Lane Recognition

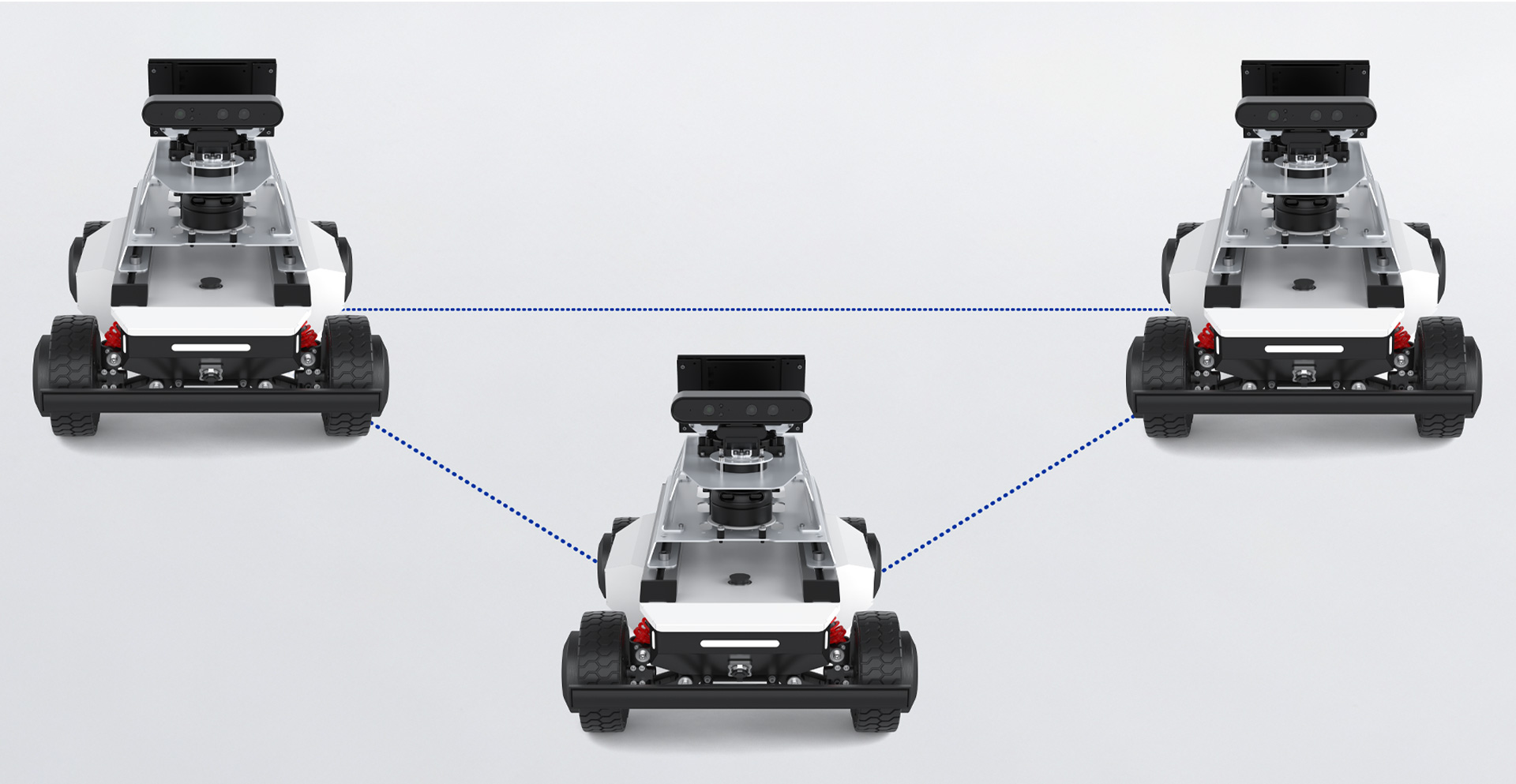

Swarm Control

Visual Navigation

Development

Training

Education

Research

Supporting diverse modular applications, we work with you to unlock new possibilities across industries.